Using LLMs to Translate User Stories Directly Into Test Cases

User stories are often written with minimal detail, just enough to move development forward. This approach works when the team shares context, but it creates problems when new members join or QA needs to write test cases without that background. Much of the work then rests on undocumented assumptions, edge cases, and exceptions. The key question becomes: are stories written to enable feature delivery, or to ensure testability? Running stories through a generative AI test workflow can help identify gaps, surface missing scenarios, and make requirements more reliable.

Why Testability Depends on How Stories Are Written

Stories describe functionality, but not always in a way that supports testing. They outline what the user wants to do, but skip how the system should respond, what inputs are expected, or what constraints apply.

Within a team, these gaps get filled in through experience or discussion. But when LLMs are involved, missing details surface immediately. The model can’t ask the team what was meant. It can only work with what’s written.

That’s where testability begins to depend on story quality. Stories that are specific, structured, and concrete produce clearer, more complete test outputs. Stories that rely on shared team memory or vague intent don’t.

When writing stories with testability in mind, make sure they cover:

- Negative paths (what happens when things go wrong?)

- Field-level rules (what inputs are valid, invalid, or optional?)

- Contextual boundaries (when should this feature not be available?)

- Cross-feature dependencies (what else could this impact?)
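One way to make this checklist concrete is to record it alongside the story and flag the sections that are still empty. The sketch below is only an illustration: the `StoryChecklist` class and its field names are hypothetical and simply mirror the four points above. It needs Python 3.9 or later.

```python
from dataclasses import dataclass, field


@dataclass
class StoryChecklist:
    """Hypothetical container for the testability details of one user story."""

    story: str
    negative_paths: list[str] = field(default_factory=list)
    field_rules: list[str] = field(default_factory=list)
    contextual_boundaries: list[str] = field(default_factory=list)
    cross_feature_dependencies: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of the checklist sections that are still empty."""
        sections = {
            "negative_paths": self.negative_paths,
            "field_rules": self.field_rules,
            "contextual_boundaries": self.contextual_boundaries,
            "cross_feature_dependencies": self.cross_feature_dependencies,
        }
        return [name for name, items in sections.items() if not items]


checklist = StoryChecklist(
    story="As a user, I want to reset my password",
    negative_paths=["Reset link has expired"],
    field_rules=["Email must be a valid address"],
)
print(checklist.missing_sections())
# ['contextual_boundaries', 'cross_feature_dependencies']
```

An empty section does not mean the story is wrong. It is a prompt to ask the question before the story reaches QA or an LLM.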

The Manual Testing Bottleneck

Manual testing takes time. It is slow because it starts with missing information, which forces QA to take a step back and fill in the gaps before testing can begin.

The feature’s built, technically, but not test-ready. Someone’s still figuring out what the edge cases are. Or asking what happens when a field is left empty. All these things are happening in the background, but deadlines don’t wait for things to settle down. So testing gets squeezed, and a few cases are skipped.

Manual testing isn’t slow because people are slow. It’s slow because the work starts late. And it starts late because the story wasn’t built to be tested; it was built to be built.

How LLMs Translate Stories Into Test Cases

LLMs predict patterns based on the input they receive. When given a user story, they look for structure, expected behaviors, and implied logic, and then try to generate test cases that match. A generative AI test works the same way: it mirrors the quality of what’s written.

LLMs don’t “understand” the story the way humans do. Unless prompted to, they don’t ask follow-up questions or go looking for missing context. They simply respond to what’s written. If the story clearly defines inputs, outputs, conditions, and exceptions, the model generates structured test steps. But if the story is vague or open-ended, the test output reflects that.

LLMs work by recognizing structure within the language they’re given. When a user story is written clearly, the model breaks it down into a few basic components:

- Actor: Who is performing the action

- Action: What they’re trying to do

- Intent: Why they’re doing it, or what outcome they expect

This basic structure helps the model understand the flow of the story and generate meaningful test cases. The LLM picks up on patterns naturally, as long as the language is clear enough. It suggests tests that cover functionality, UI elements, validation rules, and even access control or security behaviors.
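To illustrate the Actor / Action / Intent breakdown, here is a minimal sketch that splits a story written in the common “As a …, I want to … so that …” template. This is not how an LLM works internally. It is a plain regular expression, and the `StoryParts` type and `parse_story` function are hypothetical names introduced for this example. It assumes the story follows that template.

```python
import re
from typing import NamedTuple, Optional


class StoryParts(NamedTuple):
    actor: str
    action: str
    intent: Optional[str]  # None when the story has no "so that ..." clause


# Assumed template: "As a/an <actor>, I want to <action>[ so that <intent>]"
STORY_PATTERN = re.compile(
    r"^As an? (?P<actor>.+?), I want to (?P<action>.+?)"
    r"(?:,? so that (?P<intent>.+?))?\.?$",
    re.IGNORECASE,
)


def parse_story(story: str) -> Optional[StoryParts]:
    """Split a templated user story into actor, action, and intent."""
    match = STORY_PATTERN.match(story.strip())
    if match is None:
        return None  # The story does not follow the assumed template.
    return StoryParts(match["actor"], match["action"], match["intent"])


print(parse_story("As an admin, I want to add new users with role-based access."))
# StoryParts(actor='admin', action='add new users with role-based access', intent=None)
```

A missing intent, as in this case, is a useful signal on its own: the story says what the admin wants to do but not why, which leaves the expected outcome open to interpretation.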

Take this story, for example:

“As an admin, I want to add new users with role-based access.”

A well-trained model can turn that into a set of practical test cases, such as:

- Confirm that the admin dashboard includes an “Add User” button.

- Check that only users with admin rights can access this feature.

- Verify the presence of a dropdown or selector for assigning roles.

- Test that role-based permissions are enforced after user creation.

- Validate that user input fields (like email or username) have proper validation.

- Confirm that a welcome or onboarding email is triggered after creation.

- Attempt to add a duplicate user and check for appropriate error handling.

Benefits of LLM-Driven Test Case Generation

When a user story is written clearly, LLMs turn it into test cases in seconds. You don’t have to write everything from scratch or wait until the dev work is done. You can check the story, see what kind of tests show up, and decide what’s missing before things move forward.

LLMs make things easier in several ways:

- You don’t start from zero: The model gives you a basic structure to work with, so you don’t have to write every test case by hand. That is enough to unblock the process. You will be reviewing, editing, and improving what’s already there.

- The output follows a pattern: LLMs stick to patterns. Even if different people write the stories in different tones or formats, the test cases that come out follow a steady structure. That consistency helps, especially when there’s a lot to go through.

- Testers get more time to focus on what matters: LLMs write basic test steps, so testers don’t have to waste time on repeat work. They can spend that time thinking about edge cases and weird flows. The model does the boring part so that testers can do the important part.

- Test and story stay closer together: When test cases are pulled directly from the story, they don’t drift off. They stay tied to what was written. That makes it easier to see if something’s missing or off. And when the story changes, you know exactly which test to look at. You’re not chasing things across docs or guessing what connects to what.

- Helps teams align without extra meetings: Sometimes, devs, testers, and product managers read the same story and imagine completely different things. When you run that story through an LLM and look at the test cases it generates, it gives everyone a common starting point. It keeps everyone on the same page without adding more to the calendar.

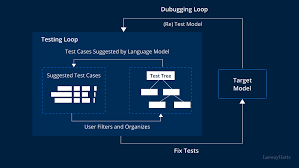

Using LambdaTest Alongside LLMs

LLMs break down a user story into testable parts. You give them a line like “As a user, I want to reset my password,” and they’ll turn it into checks. You get structure and a base to work from. But the job’s only half done. Knowing what to test isn’t the same as knowing whether it works everywhere it needs to.

LLMs provide the structure and base test cases, while LambdaTest gives teams access to hundreds of browser and device combinations to execute those tests without setting up anything manually.

LambdaTest is a GenAI-native test execution platform that supports manual and automated testing at scale across 3,000+ browser and OS combinations. With features like automated visual testing, teams can validate both functionality and UI consistency across environments. This means LLM-generated test cases can be executed where they matter most, removing guesswork and providing immediate visibility into how a story behaves in different environments.

There’s more to it than just writing and running tests. LLM-generated test cases can be reused across future projects as well. That means teams don’t have to start from scratch every time they need to run regression tests. And when those tests are run early, teams get faster feedback. QA doesn’t have to wait on staging, and devs don’t have to wait on QA.

So the LLM helps define the “what,” and LambdaTest helps validate the “how” and “where.” You still need smart people writing good stories and checking edge cases, but the workflow itself becomes smoother, faster, and easier to trust.

Challenges of Using LLMs for Test Case Generation

LLMs rely heavily on the input they’re given and don’t always catch what experienced testers would notice right away. Before fully depending on them, you should understand some of their limitations.

- Vague details in user stories: LLMs work only with the information they’re given. If a story doesn’t clearly explain the user’s goal, expected behavior, or conditions, the generated test cases end up too broad or miss important checks altogether.

- Over-reliance on generated output: The suggestions from an LLM should be a starting point, not a final list. Human review is still needed to catch edge cases, system integrations, and rules specific to your product.

- Not all tests are easily automatable: Some outputs look good on paper but require specific data setups or manual steps. Teams still need to decide which test cases are automation-ready and which aren’t.

- Lack of full product context: Not everything a tester needs is written down. Sometimes key details live in someone’s head, in old Slack threads, or buried in earlier tickets. LLMs don’t have access to that extra context unless it’s included in the prompt. So even well-written stories can lead to test cases that miss how the feature actually works in the product.
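Since the model only sees what is in the prompt, one practical mitigation is to pass the missing context in explicitly. The sketch below only assembles the prompt text. It does not call any particular LLM API, and the function name, the instruction wording, and the sample context note are all assumptions chosen for illustration.

```python
from typing import Optional, Sequence


def build_prompt(story: str, context_notes: Optional[Sequence[str]] = None) -> str:
    """Assemble a test-generation prompt from a story and optional context."""
    lines = [
        "Generate test cases for the user story below.",
        "Cover positive paths, negative paths, and field validation.",
        "List any assumptions you had to make as open questions.",
        "",
        f"User story: {story.strip()}",
    ]
    if context_notes:
        # Context that would otherwise live only in chat threads or old tickets.
        lines.append("")
        lines.append("Product context:")
        lines.extend(f"- {note.strip()}" for note in context_notes)
    return "\n".join(lines)


print(
    build_prompt(
        "As a user, I want to reset my password",
        context_notes=["Reset links expire after a fixed time window"],
    )
)
```

Asking the model to list its assumptions as open questions also helps with over-reliance: reviewers can see where the output rests on a guess rather than on something the story actually says.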

Conclusion

LLMs take a lot of the weight off when it comes to writing test cases. They turn user stories into structured checks, bring clarity early in the process, and help teams move faster without skipping steps. Running stories through a generative AI test setup helps surface gaps quickly, while tools like automated visual testing validate consistency at scale.

This changes the shape of QA work. Less time is spent repeating the basics, and more time goes into thinking through what could go wrong.

Teams still need judgment, product knowledge, and the ability to ask the right questions. But with the right setup, those strengths aren’t buried under setup work. They get to show up where they’re needed most.